William Brannon

I’m a researcher in AI and machine learning, working on LLMs, data-centric AI, and computational methods for understanding how people form and change their opinions. I recently completed my PhD at MIT, where my work combined machine learning, causal inference, and computational social science.

My research has three main threads:

- Data-centric AI: Large language models, the role and provenance of training data, and how we evaluate models in light of their data. This includes my role as one of the leads of the Data Provenance Initiative, which audits AI training datasets and builds tools for licensing, consent, and transparency.

- Causal AI: Developing ML-based methods for causal inference and treatment effect estimation, especially using LLMs to estimate heterogeneous treatment effects in randomized experiments. Most recently, I’ve applied these methods to persuasion experiments, using LLMs as opinion models to simulate opinion change across different audiences.

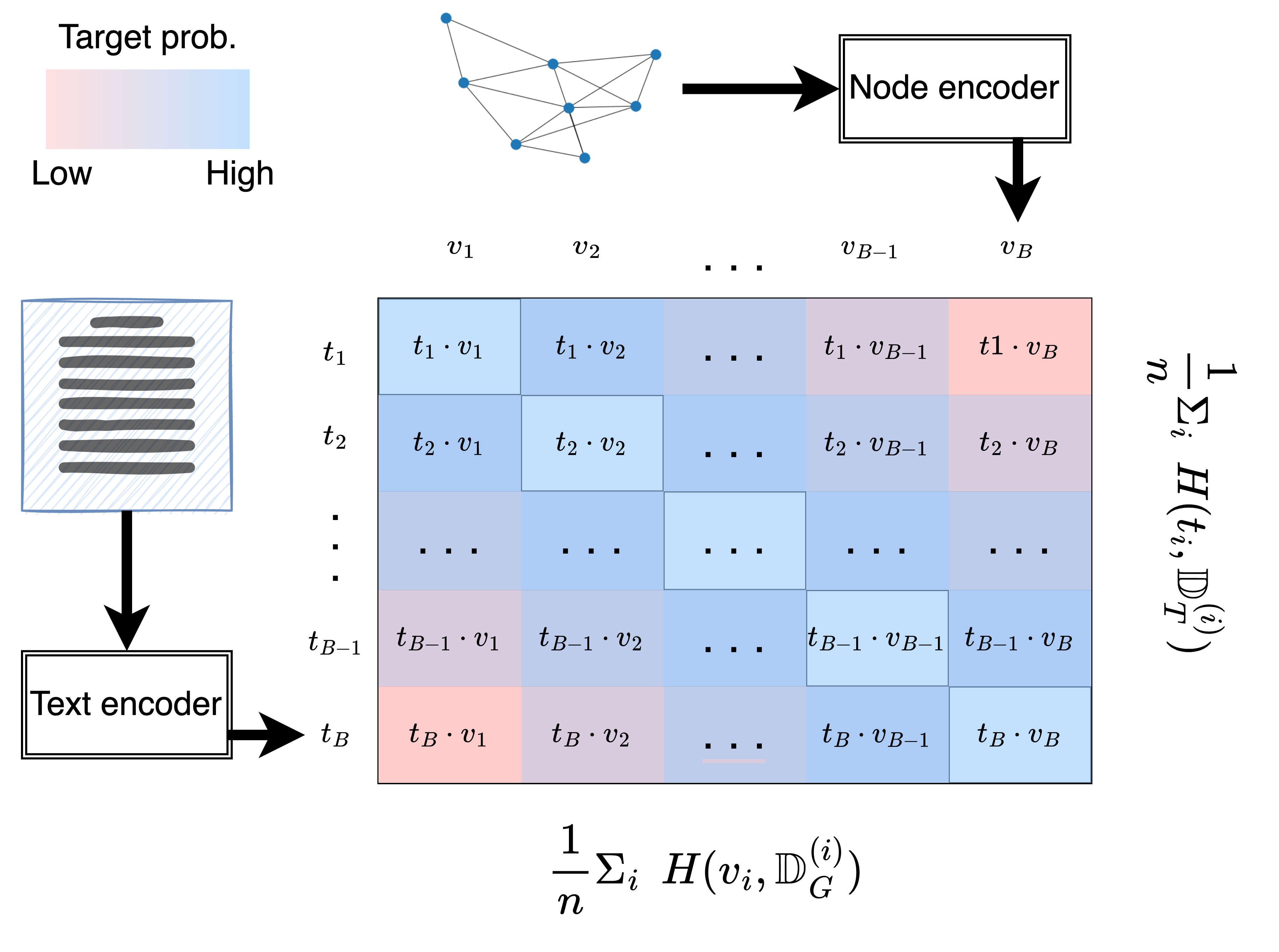

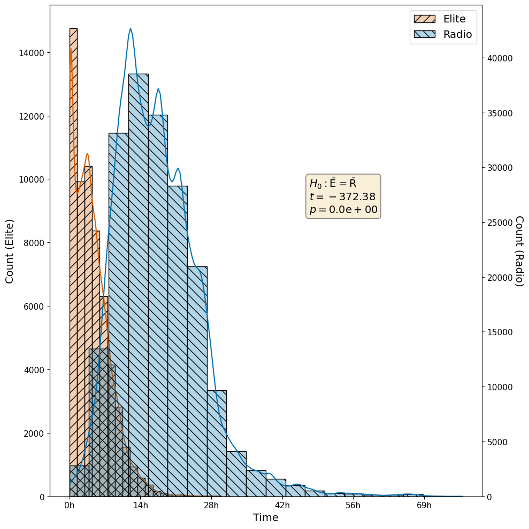

- Computational social science: Persuasion, media ecosystems, and social networks. I’ve worked on news-cycle dynamics across media platforms, graph-aware architectures and training objectives for social network data, and LLM-powered tools like AudienceView and the Bridging Dictionary to help journalists interpret audience feedback and politically charged language.

Methodologically, I work with LLM post-training and inference techniques, randomized experiments and other causal-inference tools, and representation learning methods for text and network data. This work uses modern Python ML stacks (PyTorch, Hugging Face) on cloud and HPC infrastructure. Earlier in my career, I spent several years as a data scientist in U.S. politics (at the DNC, the 2012 presidential campaign, and the Analyst Institute), which still shapes how I think about experimentation, stakeholders, and deployed models.

If you’re interested in these areas and want to chat about collaborations or research/ML roles, please get in touch! You can reach me by email: will.brannon@gmail.com. For more info, you can also download my CV or check out my publications.

news

| Date | News |
|---|---|
| May 13, 2025 | I successfully defended my PhD dissertation, “Language Models as Opinion Models: Techniques and Applications,” earlier today! The dissertation is not yet available online, but it will be posted shortly, along with preprints of the new work it contains. |
| Apr 26, 2025 | Our paper “Bridging the Data Provenance Gap Across Text, Speech and Video” appeared today at ICLR 2025! |
| Jan 22, 2025 | ICLR 2025 has accepted our new paper “Bridging the Data Provenance Gap Across Text, Speech and Video”! This paper is the third phase of work in the Data Provenance Initiative. |
| Dec 11, 2024 | The latest Data Provenance Initiative paper, “Consent in Crisis: The Rapid Decline of the AI Data Commons”, appeared today at NeurIPS 2024. |
| Nov 12, 2024 | Our paper “On the Relationship between Truth and Political Bias in Language Models” appeared as a main-conference poster at EMNLP 2024! |